As highlighted by Meta CEO Mark Zuckerberg in a recent overview of the impact of AI, Meta is increasingly relying on AI-powered systems for more aspects of its internal development and management, including coding, ad targeting, risk assessment, and more.

And that could soon become an even bigger factor, with Meta reportedly planning to use AI for up to 90% of all of its risk assessments across Facebook and Instagram, including all product development and rule changes.

As reported by NPR:

“For years, when Meta launched new features for Instagram, WhatsApp and Facebook, teams of reviewers evaluated potential risks: Could it violate users’ privacy? Could it cause harm to minors? Could it worsen the spread of misleading or toxic content? Until recently, what are known inside Meta as privacy and integrity reviews were conducted almost entirely by human evaluators, but now, according to internal company documents obtained by NPR, up to 90% of all risk assessments will soon be automated.”

Which seems potentially problematic, putting a lot of trust in machines to protect users from some of the worst aspects of online interaction.

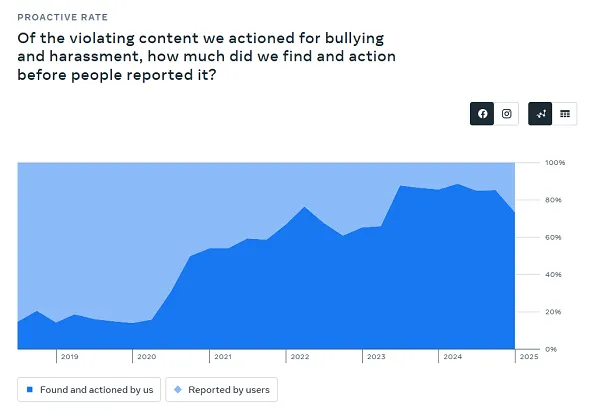

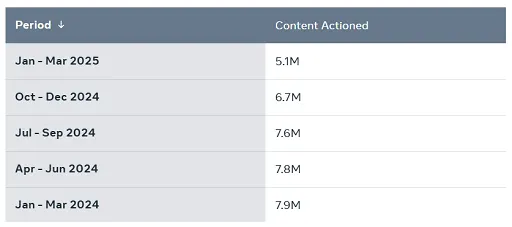

But Meta is confident that its AI systems can handle such tasks, including moderation, which it showcased in its Transparency Report for Q1, published last week.

Earlier in the year, Meta announced that it would be changing its approach to “less severe” policy violations, with a view to reducing the number of enforcement mistakes and restrictions.

In changing that approach, Meta says that when it finds that its automated systems are making too many mistakes, it’s now deactivating those systems entirely as it works to improve them, while it’s also:

“…getting rid of most [content] demotions and requiring greater confidence that the content violates for the rest. And we’re going to tune our systems to require a much higher degree of confidence before a piece of content is taken down.”

So, essentially, Meta’s refining its automated detection systems to ensure that they don’t remove posts too hastily. And Meta says that, so far, this has been a success, resulting in a 50% reduction in rule enforcement mistakes.

Which is seemingly a positive, but then again, a reduction in mistakes can also mean that more violative content is being displayed to users in its apps.

Which was also reflected in its enforcement data:

As you can see in this chart, Meta’s automated detection of bullying and harassment on Facebook declined by 12% in Q1, which means that more of that content was getting through because of Meta’s change in approach.

Which, on a chart like this, doesn’t look like a significant impact. But in raw numbers, that’s a difference of millions of violative posts that Meta is no longer taking faster action on, and millions of harmful comments that are being shown to users in its apps as a result of this change.

The impact, then, could be significant, but Meta’s looking to rely more on AI systems to understand and enforce these rules in future, in order to maximize its efforts on this front.

Will that work? Well, we don’t know as yet, and this is just one aspect of how Meta’s looking to integrate AI to assess and action its various rules and policies, to better protect its billions of users.

As noted, Zuckerberg has also flagged that “sometime in the next 12 to 18 months,” most of Meta’s evolving code base will be written by AI.

That’s a more logical application of AI processes, in that they can replicate code by ingesting vast amounts of data, then providing assessments based on logical matches.

But when you’re talking about rules and policies, and things that could have a big impact on how users experience each app, that seems like a riskier use of AI tools.

In response to NPR, Meta said that product risk review changes will still be overseen by humans, and that only “low-risk decisions” are being automated. Even so, it’s a window into the potential future expansion of AI, where automated systems are being relied upon more and more to dictate actual human experiences.

Is that a better way forward on these elements?

Maybe it will end up being so, but it still seems like a significant risk to take, when we’re talking about such a huge scale of potential impacts, if and when these systems make mistakes.