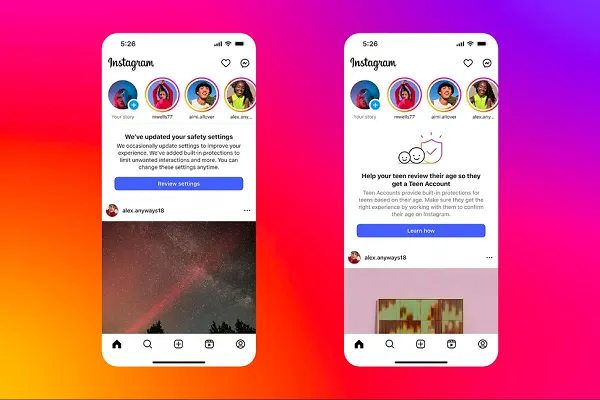

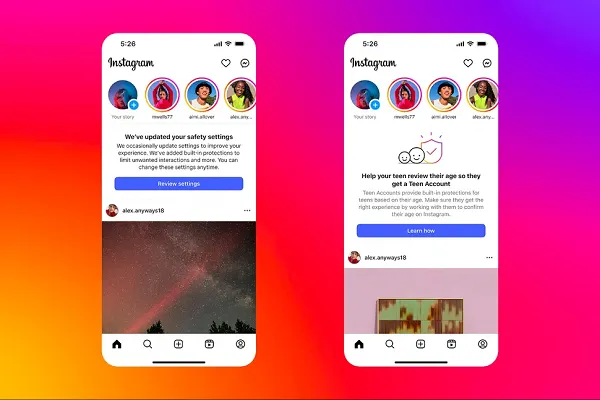

Instagram is looking to advance its teen safety measures, with improved AI detection of account holder age, and an expansion of its teen accounts to users in Canada.

As reported by Android Central, Instagram's updated user detection process will automatically restrict interactions with certain accounts when it determines that the user is under 18, even if the user tries to lie about their age by listing an adult birth date.

It's the latest deployment of Meta's advancing age detection systems, which use various factors to determine user age, including who follows you, who you follow, what content you interact with, and more. Meta's systems can also factor birthday well-wishes from other users into their assessment.

It's not a perfect system, and Meta has acknowledged that the process will still make some errors (users will have the option to appeal and/or correct their age if they're incorrectly restricted). But Meta says that its system is improving, and learning more about the key considerations that are likely to indicate user age via platform usage.

Meta will also now default Canadian teens under the age of 16 into its advanced safety mode, which can only be deactivated by a parent, as it has for U.S. teens since last September.

This is an important focus for the app, as more and more regions look to implement new restrictions on social media usage to protect young users.

Over the past year, several European nations, including France, Greece and Denmark, have put their support behind proposed laws to cut off young teens from social media apps entirely. Spain is considering restricting access for users under 16, while Australia and New Zealand are also moving to implement their own laws, and Norway is in the process of developing its own regulations.

It seems inevitable that some level of restriction is going to be imposed on teen social media use in many regions, but the challenge then is how do you enforce it, and how do you ensure that you can legally hold platforms to account for upholding their requirements?

That's difficult because each platform has its own system for detecting and protecting teens, and some, like these new measures from IG, are more advanced than others. But in a legal sense, enforcement requires standards, and safety benchmarks that can be met by all such approaches.

At present, there's no universal standard on age detection, and while AI is showing some promise, various other methods are being tested, including selfie verification by third-party providers (which poses its own exposure risk).

In Australia, for example, which is moving ahead with its own laws on teen social media access, regulators recently tested 60 different age verification approaches, from a range of vendors, and found that while some options are mostly effective, there will be errors, especially for users within two years of 16.

So far, the Australian government hasn't outlined the specific requirements for social platforms with regard to age checking, with the proposed laws only requiring that "all reasonable steps" are undertaken to remove the accounts of those aged under 16. As it stands, there won't be a legally enforceable standard for accuracy unless the government can establish a universal checking method.

Which is why it's testing so many options, but again, the challenge of enforcement remains a key impediment, and will likely blunt all potential legal recourse by authorities where such laws are enacted.

That is, unless agreement can be reached on a standard approach, which is why Meta's use of AI age verification could eventually establish a new industry benchmark.

Meta is improving its approach and driving better results, and expanding these systems to more regions is another step in the right direction.