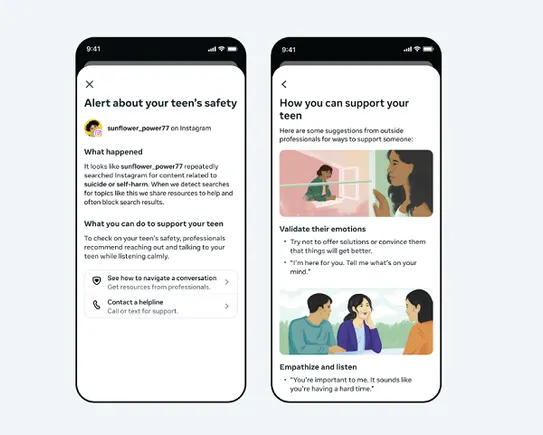

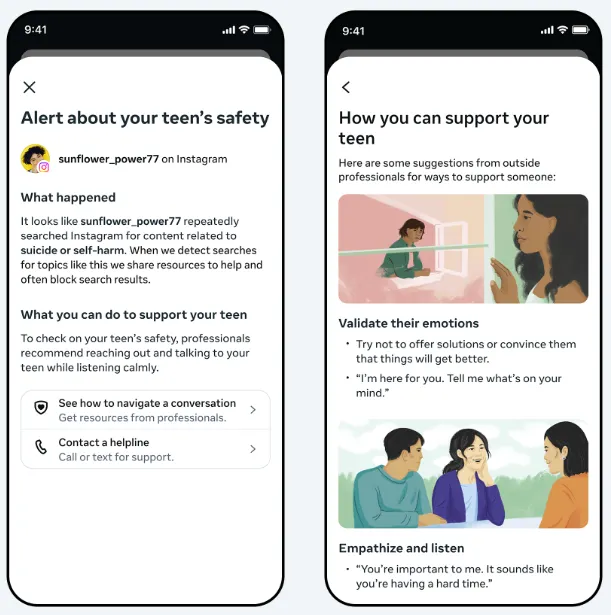

Meta introduced a new safety feature for Instagram on Thursday that can alert parents if their teenage child repeatedly searches for terms related to suicide or self-harm within the app.

The alerts, which will roll out to parents in the U.S., Canada, the U.K. and Australia starting next week, will send a push notification to an authorized parent's phone. The notification will provide an overview of what happened, along with links to resources that can help parents address their concerns with their teens.

Parents will need to be enrolled in Instagram's Parental Supervision program to be eligible for the alerts.

According to Meta: “We understand how sensitive these issues are, and how distressing it could be for a parent to receive an alert like this. The vast majority of teens don't try to search for suicide and self-harm content on Instagram, and when they do, our policy is to block these searches, instead directing them to resources and helplines that can offer support. These alerts are designed to make sure parents are aware if their teen is repeatedly trying to search for this content, and to give them the resources they need to support their teen.”

Meta said it's launching these alerts on Instagram first, but it will also look to bring them to Meta AI, because teens are increasingly asking its artificial intelligence chatbot similar questions.

“While our AI is already trained to respond safely to teens and provide resources on these topics as appropriate, we're now building similar parental alerts for certain AI experiences,” Meta said. “These will notify parents if a teen attempts to engage in certain types of conversations related to suicide or self-harm with our AI.”

The update comes as Meta faces increased scrutiny over its teen protection measures, with a court case underway in California concerning allegations that Meta pursued a strategy of growth at all costs and ignored the impact of its products on children's mental and physical health.

The trial, in which both Meta CEO Mark Zuckerberg and Instagram chief Adam Mosseri have already faced questioning, stems from allegations that Meta was aware of teen safety concerns for years before it took action.

Meta has since implemented a range of teen safety measures, but the company could face significant penalties if it turns out that Meta delayed acting on these concerns because of business growth considerations.

Either way, the trial is another dent in the public image of the company, which already has a poor reputation for safety and user protection.

Meta may be hoping that its more recent efforts on teen protection, including this new announcement, will help to shift that perception and ensure that parents and teens feel safe and protected in its apps.