AI chatbots are set to come under regulatory scrutiny, and could face new restrictions, as a result of a new probe.

Following reports of concerning interactions between young users and AI-powered chatbots in social apps, the Federal Trade Commission (FTC) has ordered Meta, OpenAI, Snapchat, X, Google and Character AI to provide more information on how their AI chatbots function, in order to establish whether adequate safety measures have been put in place to protect young users from potential harm.

As per the FTC:

“The FTC inquiry seeks to understand what steps, if any, companies have taken to evaluate the safety of their chatbots when acting as companions, to limit the products’ use by and potential negative effects on children and teens, and to apprise users and parents of the risks associated with the products.”

As noted, these concerns stem from reports of potentially harmful interactions between AI chatbots and teens across various platforms.

For example, Meta has been accused of allowing its AI chatbots to engage in inappropriate conversations with minors, with Meta even encouraging such interactions as it seeks to maximize use of its AI tools.

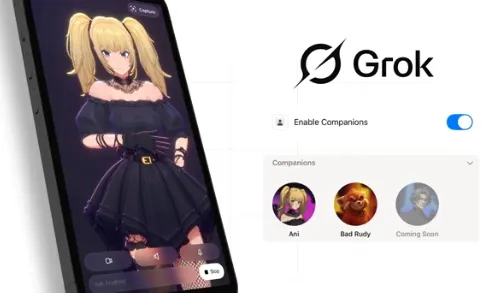

Snapchat’s “My AI” chatbot has also come under scrutiny over how it engages with kids in the app, while X’s recently launched AI companions have raised a raft of new concerns as to how people will develop relationships with these digital entities.

In each of these examples, the platforms have pushed to get these tools into the hands of users as a means to keep up with the latest AI trend, and the worry is that safety considerations may have been overlooked in the name of progress.

We don’t yet know what the full impacts of such relationships will be, or how they will affect users long-term. That uncertainty has prompted at least one U.S. senator to call for all teens to be banned from using AI chatbots entirely, which is at least part of what has inspired this new FTC investigation.

The FTC says that it will be specifically looking into what actions each company is taking “to mitigate potential negative impacts, limit or restrict children’s or teens’ use of these platforms, or comply with the Children’s Online Privacy Protection Act Rule.”

The FTC will be examining various aspects, including development and safety testing, to ensure that all reasonable measures are being taken to minimize potential harm within this new wave of AI-powered tools.

And it’ll be interesting to see what the FTC ends up recommending, because thus far, the Trump Administration has leaned towards progress over process in AI development.

In its recently released AI Action Plan, the White House put a specific focus on eliminating red tape and government regulation, in order to ensure that American companies are able to lead the way on AI development. That could extend to the FTC, and it’ll be interesting to see whether the regulator is able to implement restrictions as a result of this new push.

But this is an important consideration, because, like social media before it, I get the sense that we’re going to be looking back on AI bots in a decade or so and wondering how we can restrict their use to protect kids.

But by then, of course, it will be too late. Which is why it’s important that the FTC takes this action now, and that it is able to implement new policies.