Following a report from Wired that X has been inundated with misinformation since the start of the Iran conflict, X announced that it’s revising its creator revenue share policies to prevent manipulation.

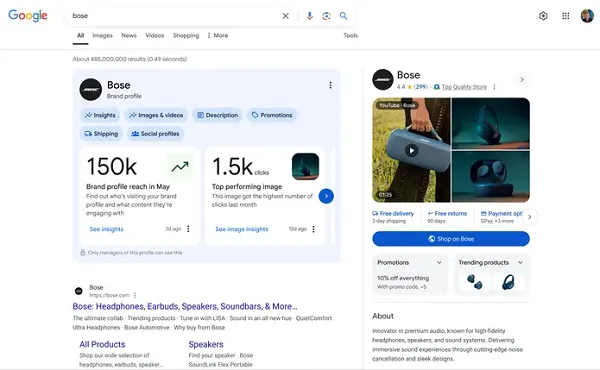

Nikita Bier, X’s head of product, announced in a March 3 post on X that the platform is specifically looking to address artificial intelligence-generated deepfakes related to the conflict in order to protect the integrity of the platform.

As per Bier: “During times of war, it’s critical that people have access to authentic information on the ground. With today’s AI technologies, it’s trivial to create content that can mislead people. Starting now, users who post AI-generated videos of an armed conflict – without adding a disclosure that it was made with AI – will be suspended from Creator Revenue Sharing for 90 days.”

Bier said that further violations will result in a permanent suspension from the program.

“This will be flagged to us by any post with a Community Note or if the content contains metadata (or other signals) from generative AI tools,” Bier said.

Wired reported on Feb. 28 that hundreds of posts on X, some with millions of views, promoted misleading claims about the conflict.

As Wired explained: “In some cases, alleged video footage of the attack shared in posts on X are actually months or years old. In a number of posts, video footage of apparent attacks have been attributed to incorrect locations. Numerous images shared on X appear to be altered or generated with AI. Other posts attempt to pass off video game footage as scenes from the conflict.”

And with X’s creator revenue share program incentivizing users to post content that drives more views and engagement, there’s a clear motivation for creators to share incendiary posts, which could be part of the reason X is seeing a large influx of misinformation.

Clearly, X agrees that this is a problem, which is why it’s changing the rules of the initiative, though the key focus here is AI-generated content, not misinformation in general.

Will that help to address the spread of false claims and fake footage from the battlefield? It’s hard to say, as there are other motivations for making false claims: state-based actors also use X and other social platforms to sway opinion.

But the announcement does show that X has recognized this as a problem and is working to address the spread of false reports about a major news event.

Although, in distinction, it’s fascinating to notice that X hasn’t enacted the identical degree of enforcement for AI-generated fakes of different kinds. Like, say, photos of people that’ve been undressed by its personal Grok app.

Still, it’s a positive sign that X is taking action to combat at least one form of AI-generated misinformation.